AI Auditing Vibe Code

Our multi-model AI code audit (Claude Opus 4.5 and GPT-5.2) improved the code, but a human engineer still found basic issues that the audit had overlooked. The Spaghetti Code Limit (SCL) still lingers on the horizon: AI is a powerful tool, but not a replacement for a human expert.

Many vibe coders fear building an elegant house of cards: code that looks functional but is destined to collapse. That concern drove us to conduct a deep, multi-model AI audit of the Burrow project.

The Guardrails Have Helped, But We Need More

Determining how good AI is at developing software is the entire thesis of this blog, so this concern has weighed heavily on me since the beginning. In prior posts, I've talked about some best practices we've implemented to try to maintain quality as we go:

- Create domain-specific AI agents to follow best practices as you build (the how).

- Conduct periodic code reviews to ensure those practices are followed.

- Use PRDs to provide context for what is intended (the what and why) and include them in the periodic code review.

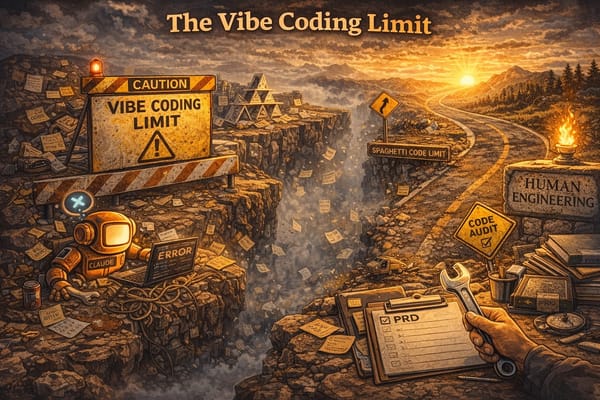

With all that, I could still tell I was pushing against the Spaghetti Code Limit (SCL) - the threshold where code complexity and cascading issues make feature implementation exponentially slower.

The next idea to push the SCL a little further off was to conduct a multi-model deep code review with specific guidelines derived from traditional software development practices.

The Deep AI Code Audit

The premise was simple. Imagine we had a very skilled senior-level engineer (human) conduct a deep audit of the project to determine whether it was built by real, talented engineers or was the product of vibe coding. Then fix whatever the audit found lacking and reset on a firm foundation before moving forward.

The plan:

- Phase 1: Meta-Prompt Generation: Use a sequence of AIs (ChatGPT to Replit/Claude) to engineer an initial, highly rigorous audit prompt. The prompt would be stored in quarterly-audit.md in the hope that it would be reusable for ongoing deep-dive audits.

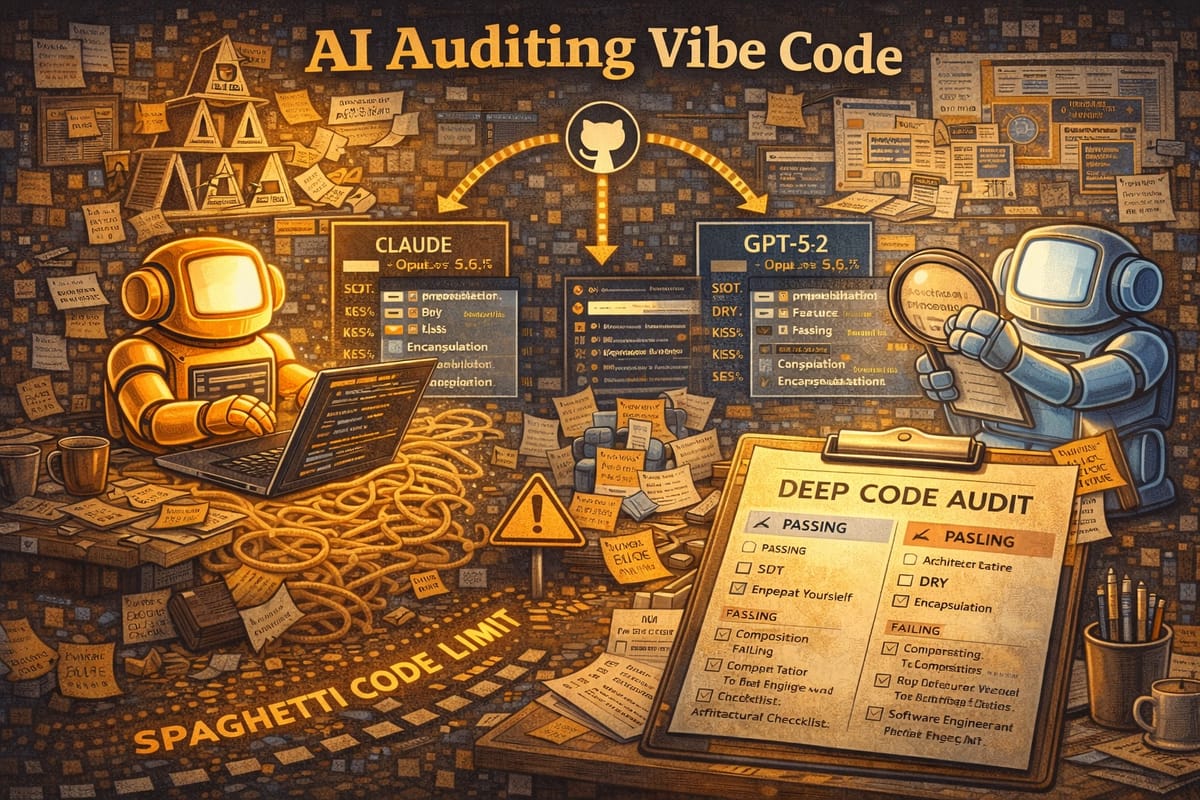

- Phase 2: Dual-Model Execution: Run the final prompt simultaneously on two leading models (Claude Opus 4.5 and OpenAI GPT-5.2) to ensure a comprehensive, multi-perspective analysis.

- Phase 3: Human Synthesis & Fix: Evaluate the combined AI recommendations and perform the final, human-led cleanup to establish a "firm foundation."
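To make the Phase 3 synthesis step concrete, here is a minimal sketch of how findings from two audit runs might be combined before a human reviews them. The `Finding` shape, the `mergeFindings` helper, and the exact-match deduplication are all assumptions for illustration; the actual audit output was free-form, and real reports would need fuzzier matching.

```typescript
// Hypothetical shape for one audit finding; the real reports were free-form text.
interface Finding {
  file: string;
  issue: string;
}

interface MergedFinding extends Finding {
  flaggedBy: string[]; // names of the models that reported this finding
}

// Merge per-model reports, deduplicating on file + normalized issue text.
function mergeFindings(reports: Record<string, Finding[]>): MergedFinding[] {
  const merged = new Map<string, MergedFinding>();
  for (const model of Object.keys(reports)) {
    for (const f of reports[model] ?? []) {
      const key = `${f.file}::${f.issue.trim().toLowerCase()}`;
      const existing = merged.get(key);
      if (existing) {
        if (existing.flaggedBy.indexOf(model) === -1) existing.flaggedBy.push(model);
      } else {
        merged.set(key, { file: f.file, issue: f.issue, flaggedBy: [model] });
      }
    }
  }
  // Findings reported by more models sort first, as a rough priority signal.
  return Array.from(merged.values()).sort(
    (a, b) => b.flaggedBy.length - a.flaggedBy.length
  );
}
```

Agreement between models is only a rough signal. A finding that a single model reports can still be the important one, which is why the final pass stays human-led.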

Initial Prompt

You are a highly skilled full-stack engineer who has been hired to conduct a thorough review of this project. You will perform a rigorous analysis of the entire project with the end goal of producing clean, robust, well-architected code while maintaining existing INTENDED functionality. This will form a high-quality foundation that would easily pass the scrutiny of other skilled full-stack engineers in a code review.

Your task goes beyond a basic code review and is intended to be systematic and complete. You have access to human peers who can answer questions, help make critical decisions, and test the results of your work.

There are 3 high-level phases to this project:

1. Familiarize yourself with the entire project. Analyze the project objectives and structure. Write an expanded prompt to give to a model to elicit the best possible plan.

2. Use the prompt from (1) to develop the plan.

3. Execute the plan – This step will involve many sub-steps and will take most of the time.

The plan should include:

- What kinds of reviews should be done? Design patterns to audit? Common anti-patterns to check for? Documentation thoroughness and accuracy? Best practices? Common pitfalls?

- How to break up the review. By file? By feature?

- The sequence of events – potentially many stages to the review – including concrete milestones. At each milestone, documentation should be updated and the code will be tested for regressions.

For now, please go ahead with phase 1: Analyze the full code base, including documentation, and produce a prompt that will lead into phase 2.

Note: Use the supporting documentation in prds/ for context and agents/ for best practices, but consider them guidelines, not the absolute truth. Highlighting discrepancies in these files from what you find and suggesting updates to these files should be part of the review.

We followed the plan, and the results were... fine. The audit identified a variety of issues that needed to be addressed, but not to the depth we had hoped. To add more rigor, we explicitly added the following section to quarterly-audit.md. Rerunning the audit found a few more issues, but not many.

Manual additions to the review prompt

Analyze:

- Layering boundaries (UI → hooks → data → server → DB)

- Module responsibilities and coupling

- Circular imports or dependency direction violations

- Configuration sprawl and environment handling

- Patterns blocking future feature development

- Repeated logic that should be abstracted

- "God files" or overly large components
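As an illustration of what the "circular imports" item asks a reviewer to look for, here is a minimal sketch of cycle detection over an import graph using depth-first search. The `ImportGraph` type and the module names are hypothetical; in practice the graph would come from a bundler or a dependency-analysis tool rather than being written by hand.

```typescript
// Hypothetical module graph: each key imports the modules listed in its array.
type ImportGraph = Record<string, string[]>;

// Depth-first search that returns the first import cycle found, or null.
function findImportCycle(graph: ImportGraph): string[] | null {
  const visiting = new Set<string>(); // modules on the current DFS path
  const done = new Set<string>(); // modules fully explored and known cycle-free
  const path: string[] = [];

  function visit(mod: string): string[] | null {
    if (done.has(mod)) return null;
    if (visiting.has(mod)) {
      // Cycle: take the path from the first occurrence of mod and close the loop.
      return path.slice(path.indexOf(mod)).concat(mod);
    }
    visiting.add(mod);
    path.push(mod);
    for (const dep of graph[mod] ?? []) {
      const cycle = visit(dep);
      if (cycle) return cycle;
    }
    path.pop();
    visiting.delete(mod);
    done.add(mod);
    return null;
  }

  for (const mod of Object.keys(graph)) {
    const cycle = visit(mod);
    if (cycle) return cycle;
  }
  return null;
}

// Example: the data layer imports a hook, reversing the intended direction.
const exampleGraph: ImportGraph = {
  "ui/Screen": ["hooks/useItems"],
  "hooks/useItems": ["data/itemsApi"],
  "data/itemsApi": ["hooks/useItems"],
};
```

Calling `findImportCycle(exampleGraph)` returns the loop between `hooks/useItems` and `data/itemsApi`, which is also a dependency direction violation under the UI → hooks → data layering.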

Architectural Principles Checklist: Evaluate adherence to these core principles:

Core Principles

- Single Source of Truth (SSOT) - Is each piece of state managed in exactly one authoritative location? No competing stores or duplicated state?

- Don't Repeat Yourself (DRY) - Are shared utilities, hooks, and components used instead of copy-paste? Is formatting/validation logic centralized?

- Redundant/Dead Code - Are there deprecated functions still present? Unused exports? Orphaned routes or components?

- Encapsulation - Are implementation details hidden behind clean interfaces? Do modules expose minimal public APIs?

- Component Isolation - Are components loosely coupled with clear boundaries? Can components be tested/modified independently?
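For readers less familiar with the SSOT item, a small sketch of the kind of duplicated state it targets. The `Cart` types and `cartTotal` helper are hypothetical and not taken from the Burrow codebase.

```typescript
// Hypothetical cart types, for illustration only.
interface CartItem {
  name: string;
  price: number; // in cents, to avoid floating-point drift
  quantity: number;
}

// Competing state: `total` repeats what `items` already implies,
// so the two can drift apart whenever one is updated without the other.
interface CartWithDuplicateState {
  items: CartItem[];
  total: number;
}

// Single source of truth: store only the items and derive the total on demand.
interface Cart {
  items: CartItem[];
}

function cartTotal(cart: Cart): number {
  return cart.items.reduce((sum, item) => sum + item.price * item.quantity, 0);
}
```

With the derived version there is no second value to keep in sync, so an update to `items` cannot leave a stale total behind.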

React/React Native Specific Principles

- Separation of Concerns - Is business logic separated from presentation? Are data fetching, state management, and UI rendering in appropriate layers?

- Composition over Inheritance - Are components built through composition (props, children, hooks) rather than class hierarchies?

- Pure Functions / Immutability - Are state updates immutable? Do utility functions avoid side effects? Are React state updates done correctly (no direct mutation)?

- Fail Fast - Are errors surfaced early via validation, type checking, and error boundaries rather than silently ignored?
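The "no direct mutation" check is easier to picture with a concrete case. React's `useState` setter compares the new value to the old one with `Object.is` and skips the re-render when they are the same reference, so mutating an array in place and setting it again can silently do nothing. The sketch below uses plain functions and a hypothetical `Item` type rather than an actual component.

```typescript
// Hypothetical item type, for illustration only.
interface Item {
  id: string;
  done: boolean;
}

// Anti-pattern: mutates the existing object and returns the same array reference.
function toggleMutating(items: Item[], id: string): Item[] {
  const item = items.find((i) => i.id === id);
  if (item) item.done = !item.done;
  return items;
}

// Immutable update: returns a new array with a new object for the changed item.
// Unchanged items keep their original references.
function toggleImmutable(items: readonly Item[], id: string): Item[] {
  return items.map((i) => (i.id === id ? { ...i, done: !i.done } : i));
}
```

An audit looking for this pattern would flag anything shaped like `toggleMutating` when its result is passed to a state setter.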

General Software Engineering Principles

- KISS (Keep it Simple Stupid) - Is the code as simple as possible? No over-engineering, premature optimization, or unnecessary abstractions?

- YAGNI (You Aren't Gonna Need It) - Are there features or abstractions built "just in case" that aren't actually used? Unused parameters, dead feature flags?

- Law of Demeter - Do components/modules only interact with their immediate dependencies? Avoids deep prop drilling, excessive context coupling, or reaching through objects.

- Principle of Least Surprise - Does code behave as expected? Are naming conventions consistent and intuitive? Do similar things work similarly?

- Defensive Programming - Are edge cases handled? Null checks, boundary conditions, invalid inputs, empty arrays?

- Convention over Configuration - Are sensible defaults used to reduce boilerplate? Does the codebase follow established framework conventions?
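Two of these items, Law of Demeter and Defensive Programming, often show up in the same line of code. Here is a minimal sketch with hypothetical `User` types: the first function reaches through nested objects and assumes they exist, while the second goes through a narrow accessor that handles the missing case.

```typescript
// Hypothetical types, for illustration only.
interface Address {
  city: string;
}
interface Profile {
  address?: Address;
}
interface User {
  name: string;
  profile?: Profile;
}

// Reaching through: the caller depends on the full nested shape and
// throws a TypeError at runtime when profile or address is missing.
function shippingLabelReaching(user: User): string {
  return `${user.name}, ${user.profile!.address!.city}`;
}

// Narrow accessor: one place knows the nested shape and handles absence.
function cityOf(user: User): string | null {
  return user.profile?.address?.city ?? null;
}

function shippingLabel(user: User): string {
  const city = cityOf(user);
  return city === null ? user.name : `${user.name}, ${city}`;
}
```

If the shape of `Profile` changes later, only `cityOf` needs to change, and callers never see an unexpected crash from a missing address.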

Conclusion

The result was better code. But a limited-scope deep dive into the actual code by a skilled engineer, my teammate Christian, revealed many very basic issues that the audit had overlooked.

The combined intelligence of two leading AI models, Claude Opus 4.5 and OpenAI GPT-5.2, improved the code but did NOT deliver the rigor we had hoped for. The SCL still lingers on the horizon.