Beyond Vibe Coding: Putting the Auditor in Charge

Stop asking your AI to "just review the code." Shifting from "model-driven" to "tool-driven" control flow, which puts the process in charge instead of the agent, dramatically improved reliability, reduced technical debt, and helped us scrub thousands of issues. Move beyond "vibe coding" to engineered AI systems.

In Beyond Vibe Coding: Self-Scaffolding a Code Auditor, I described a simple idea: give a coding agent a structured review tool instead of asking it to “just review the code.”

The difference was dramatic.

There’s a night-and-day gap between:

- A single prompt (even a very detailed one), and

- A structured review process broken into many guided steps.

Why? Because a single prompt quietly biases the agent. Given one request, the agent makes its own assumptions about how much effort to spend, how deeply to reason, and when to stop. Even a long instruction doesn’t fully override those defaults.

A structured tool changes the economics. It decomposes the review into many smaller prompts. That forces the model to spend more tokens, examine more surface area, and reason more deliberately. The result is simply better code reviews.
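To make the decomposition concrete, here is a minimal sketch of turning one broad review request into several narrow prompts. The checklist entries and the `build_step_prompts` helper are hypothetical and chosen for illustration; the article does not list the original tool's actual steps.

```python
# Hypothetical review checklist (an assumption, not the original tool's steps).
REVIEW_STEPS = [
    "error handling",
    "input validation",
    "naming and readability",
    "test coverage",
]


def build_step_prompts(path: str, source: str, steps: list[str] = REVIEW_STEPS) -> list[str]:
    """Return one narrowly scoped prompt per review step for a single file."""
    return [
        f"Review {path} for {step} only. List each finding on its own line.\n\n{source}"
        for step in steps
    ]
```

Each prompt would be sent to the model separately, so every concern gets its own share of attention and tokens rather than competing inside one large request.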

But that was only the first step.

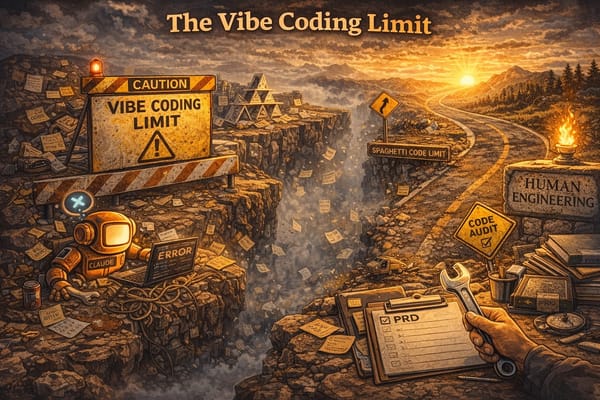

The Limits of a Model-Driven Review

The original approach was still “model-driven.” The agent remained the top-level decision maker for the entire review.

That flexibility is useful. It’s also risky.

In practice, the agent sometimes:

- Skipped review steps

- Shortened analysis near the end of its context window

- Rushed to completion when “anxious” about running out of tokens

Even strong language in the prompt didn’t reliably prevent this behavior.

The core issue wasn’t the model. It was control flow. The agent was in charge. So we flipped it.

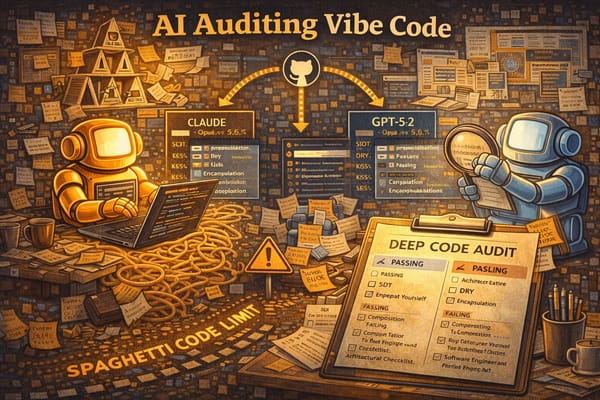

Tool-Driven Review: The Tool Owns the Process

Instead of letting the agent orchestrate itself, we put the tool in control at the top level.

That shift unlocks several important capabilities:

- Spin up fit-for-purpose agents for each task

- Enforce deterministic requirements (run linters, type checkers, unit tests after changes)

- Manage context windows per agent

- Prevent skipped steps by structuring execution externally

In other words: the LLM becomes a worker, not a manager.

We implemented this using the Claude Agent SDK to simplify orchestration and model interaction.
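The inversion of control can be sketched in a few lines of plain Python. This sketch deliberately does not use the Claude Agent SDK's API: `worker` is a hypothetical stand-in for whatever function calls the model, and `check` stands in for a deterministic validation such as a linter or test run.

```python
from typing import Callable

# A worker takes a prompt and returns the model's text output.
# Hypothetical stand-in for a real agent call.
Worker = Callable[[str], str]


def run_pipeline(
    steps: list[str],
    worker: Worker,
    check: Callable[[], bool],
) -> dict[str, str]:
    """Run every step in order; the tool, not the model, decides what runs next."""
    results: dict[str, str] = {}
    for step in steps:
        # Each step gets its own bounded prompt, so no step can be skipped
        # or shortened by the model's own judgment about remaining budget.
        results[step] = worker(f"Perform this step only: {step}")
        # Deterministic requirement enforced outside the model.
        if not check():
            raise RuntimeError(f"check failed after step {step!r}")
    return results
```

Because the loop lives in ordinary code, a step only counts as done when the tool says so, regardless of how the model feels about its context window.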

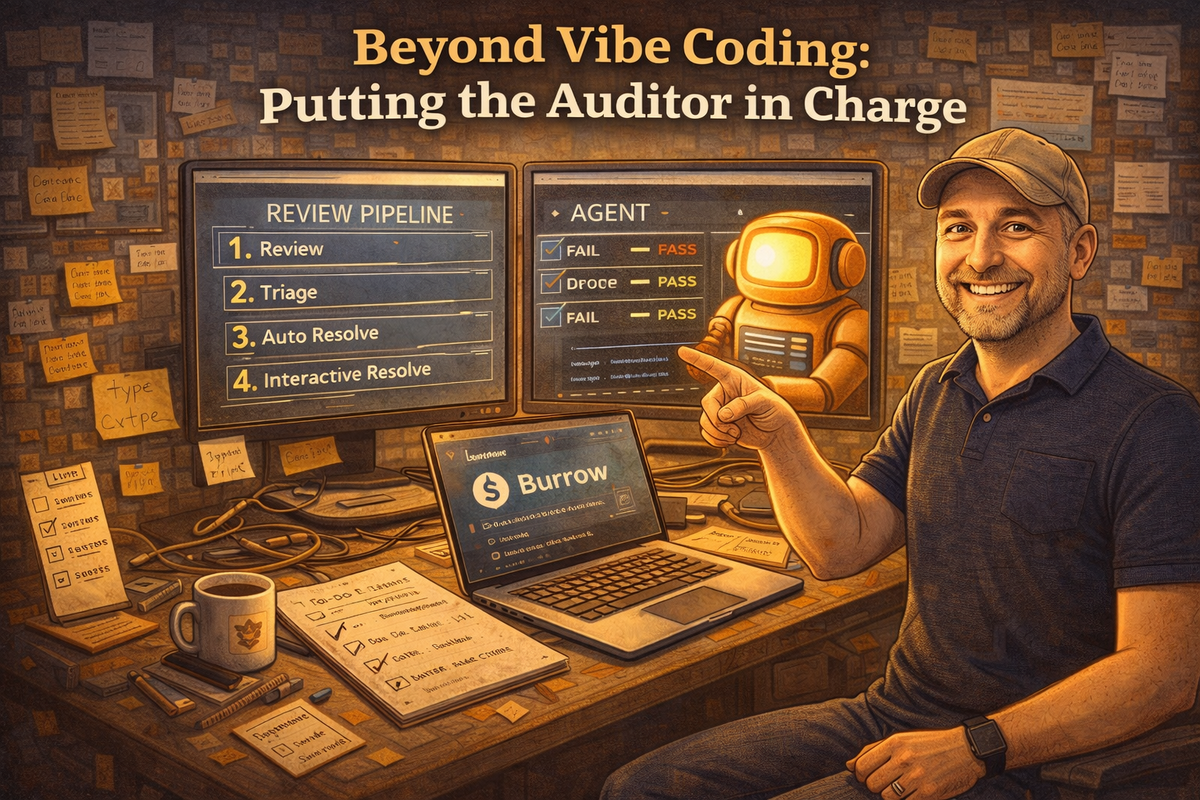

The Four-Phase Review Pipeline

The new reviewer tool runs in four main phases.

1. Review

- Identify stale files

- Queue them for review

- Spin up multiple parallel agents pulling from a shared queue

- Restrict them to read-only analysis

No edits are allowed in this phase. The goal is pure signal gathering.
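A shared work queue with parallel workers might look like the following sketch. It uses threads from the standard library; `review_file` is a hypothetical callback that would wrap a read-only agent call and return a list of findings for one file. How files are judged stale and how read-only access is enforced are not shown here, since the article does not describe them.

```python
import queue
import threading
from typing import Callable


def review_in_parallel(
    paths: list[str],
    review_file: Callable[[str], list[str]],
    num_workers: int = 4,
) -> list[str]:
    """Drain a shared queue of file paths with several worker threads."""
    work: queue.Queue = queue.Queue()
    for path in paths:
        work.put(path)

    findings: list[str] = []
    lock = threading.Lock()

    def worker() -> None:
        while True:
            try:
                path = work.get_nowait()  # pull the next file, if any
            except queue.Empty:
                return  # queue drained, this worker is done
            result = review_file(path)  # analysis only, no edits
            with lock:
                findings.extend(result)

    threads = [threading.Thread(target=worker) for _ in range(num_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return findings
```

Pulling from one queue keeps workers busy even when some files take much longer to review than others. The order of the returned findings depends on thread scheduling.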

2. Triage

The tool walks the user through the findings. Each issue is categorized as:

- Ignore – Not worth addressing

- Auto – Safe to resolve automatically, perhaps with a single line of guidance

- Interactive – Requires human input

This stage turns a wall of findings into a structured plan of attack.
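The three categories map naturally onto a small data model. The sketch below is one possible shape, with hypothetical names (`Triage`, `Finding`, `plan`); the `guidance` field holds the optional one-line hint mentioned for Auto findings.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Triage(Enum):
    IGNORE = "ignore"            # not worth addressing
    AUTO = "auto"                # safe to resolve automatically
    INTERACTIVE = "interactive"  # requires human input


@dataclass
class Finding:
    path: str
    summary: str
    triage: Optional[Triage] = None  # set by the user during triage
    guidance: str = ""               # optional one-line hint for Auto fixes


def plan(findings: list[Finding]) -> dict[Triage, list[Finding]]:
    """Group triaged findings by category; refuse findings left untriaged."""
    buckets: dict[Triage, list[Finding]] = {t: [] for t in Triage}
    for finding in findings:
        if finding.triage is None:
            raise ValueError(f"untriaged finding: {finding.summary}")
        buckets[finding.triage].append(finding)
    return buckets
```

Rejecting untriaged findings means the later phases never have to guess what the user intended.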

3. Auto Resolve

The agent resolves every finding marked Auto without further user input.

Because the tool is in charge, it can:

- Run validation after each resolution

- Ensure lint/type/test checks pass

- Isolate changes per issue

This phase is where the bulk cleanup happens.
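A resolve-then-validate loop could be sketched as follows. The `apply_fix` and `revert` callbacks are hypothetical: the first would ask an agent to fix one finding, the second would undo that change (for example by discarding uncommitted edits). The commands passed to `checks_pass` are the caller's choice; which linters, type checkers, and test runners the original tool invokes is not stated in the article.

```python
import subprocess
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


def checks_pass(commands: Sequence[Sequence[str]]) -> bool:
    """Return True only if every command exits with status 0."""
    return all(
        subprocess.run(cmd, capture_output=True).returncode == 0
        for cmd in commands
    )


def auto_resolve(
    findings: Sequence[T],
    apply_fix: Callable[[T], None],
    revert: Callable[[T], None],
    validate: Callable[[], bool],
) -> tuple[list[T], list[T]]:
    """Fix findings one at a time, validating after each and undoing failures."""
    resolved: list[T] = []
    failed: list[T] = []
    for finding in findings:
        apply_fix(finding)        # one issue per change, so failures are isolated
        if validate():            # deterministic gate after every resolution
            resolved.append(finding)
        else:
            revert(finding)       # leave the codebase in its last good state
            failed.append(finding)
    return resolved, failed
```

A caller might pass `validate=lambda: checks_pass([...])` with its own lint, type-check, and test commands. Findings that end up in `failed` are natural candidates for the interactive phase.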

4. Interactive Resolve

For issues requiring nuance, the user and agent collaborate.

Each finding becomes a focused conversation:

- Clarify intent

- Decide on tradeoffs

- Apply changes

This keeps humans involved where judgment matters most.

What Changed in Practice

This tool proved highly effective in real-world use.

We used it to scrub thousands of issues across multiple codebases.

Yes, it takes time to review and triage everything. There’s no escaping that. But the payoff is substantial:

- Cleaner code

- Reduced technical debt

- Stronger test coverage

- More consistent style

- Fewer lurking edge-case bugs

If a project is intended to live longer than a single experiment, the investment is absolutely worth it.

The Bigger Lesson

The most important takeaway isn’t about linting or orchestration. It’s about architecture.

Single-prompt workflows rely on the model’s internal discipline.

Tool-driven workflows impose external discipline.

When building AI coding systems, the question isn’t just “How smart is the model?”

It’s “Who’s in charge?”

When the tool owns the process and the model executes bounded tasks, reliability improves dramatically.

That’s the difference between vibe coding and engineered AI systems.