Beyond Vibe Coding: Self-Scaffolding a Code Auditor

Pure vibe coding breaks down quickly. We introduce a model-driven scaffold: a lightweight, AI-driven review process that gives the LLM structure, focus, and staying power without taking control away. It's a significant improvement over naive prompting and key to self-scaffolding a code auditor.

Pure vibe coding breaks down quickly once a project grows beyond a toy. With today’s models (as of January 2026), naive prompting rapidly leads to spaghetti code. We extended vibe coding’s limits by introducing a model-driven scaffold: a lightweight, AI-driven review process that gives the model structure, focus, and staying power without taking control away from it.

Scaffolding: Why Determinism is Key

Much has been written about tooling for AI models (often called scaffolding, harnessing, or orchestration), and for good reason. Injecting determinism into the development process wherever possible is an effective way to improve results and manage feature sets that would otherwise overwhelm the model.

Rather than painstakingly hand-crafting custom tools, we can bootstrap the system by having the model itself vibe-code tools that it then uses to extend its own capabilities. It’s a delightfully recursive approach to software development.

I classify such tools as either:

- Model-driven scaffolds – Designed so that an LLM drives the tool directly and ultimately has full control over the process.

- Tool-driven scaffolds – Designed so that deterministic code is in control at the top level, and the LLM provides responses at key steps during the process.

Each approach has its advantages, but for Burrow, which lives inside Replit, a model-driven scaffold made the most sense.
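To make the distinction concrete, here is a minimal Python sketch of the two control flows. It is an illustration only, not Burrow's code: `Model`, `tool_driven_review`, `ReviewTool`, and `model_driven_review` are hypothetical names, and the "model" is a plain callable standing in for an LLM.

```python
from typing import Callable, List

# Hypothetical stand-in for an LLM call: takes a prompt, returns text.
Model = Callable[[str], str]


def tool_driven_review(model: Model, checklist: List[str]) -> List[str]:
    """Tool-driven: deterministic code owns the loop; the model answers each step."""
    return [model(f"Check the code for: {item}") for item in checklist]


class ReviewTool:
    """Model-driven: the tool is passive and only responds when it is called."""

    def __init__(self, checklist: List[str]) -> None:
        self._pending = list(checklist)

    def next(self) -> str:
        if not self._pending:
            return "Review complete."
        item = self._pending.pop(0)
        return f"Check the code for: {item}. Then call next again."


def model_driven_review(agent: Callable[[ReviewTool], None], checklist: List[str]) -> None:
    """Model-driven: the agent owns the loop and decides when to call the tool."""
    agent(ReviewTool(checklist))
```

In the first function the loop cannot be skipped or reordered by the model. In the second, the tool can only nudge, which is why its messages carry the instruction to call it again.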

The Burrow Reviewer: A Model-Driven Scaffold in Practice

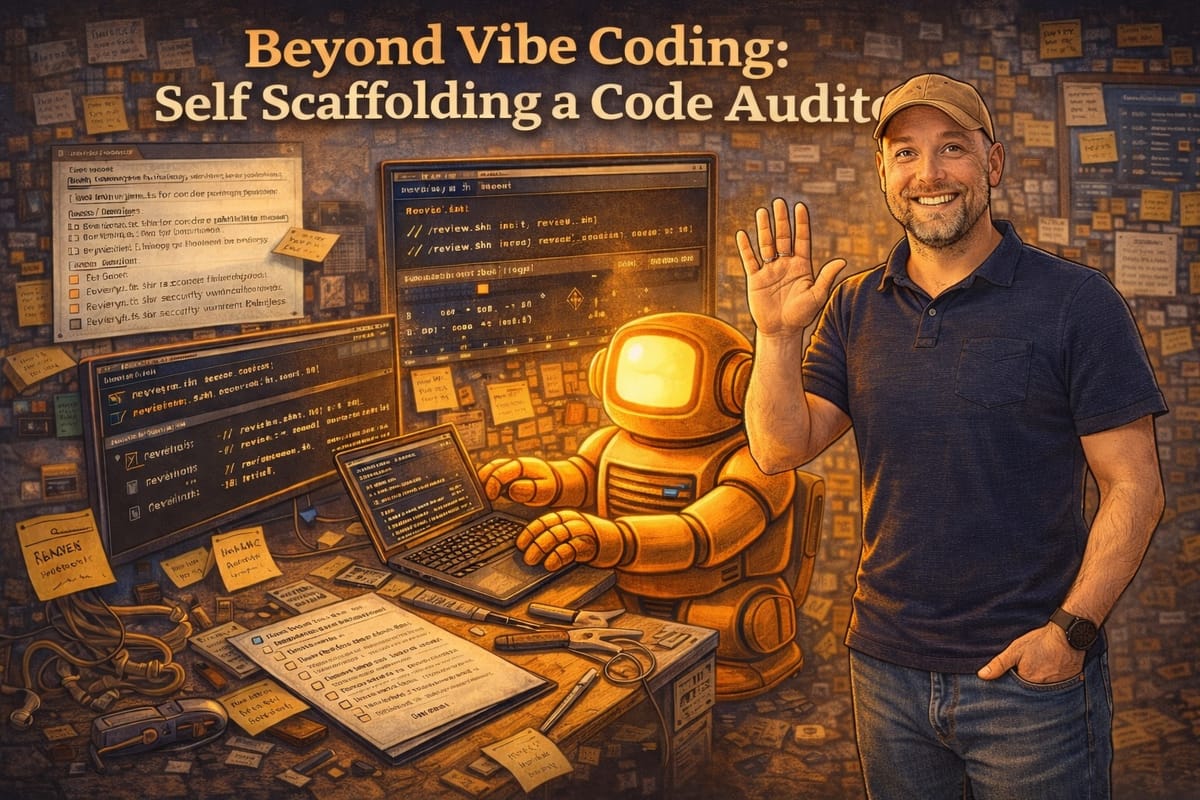

On Burrow, we prototyped a software review tool designed to ensure that after each round of changes, the code receives a reasonably thorough review. The approach was intentionally simple:

- The reviewer is implemented as a script that the AI model invokes to run the review process.

- It maintains a list of reviewed files along with a hash for each file, allowing it to detect changes that require re-review.

- The review checklist lives in a human-readable Markdown document, along with the prompts associated with each checklist item.

- A separate document accumulates review findings as they are discovered.
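The hash-based change detection described above could look roughly like the following Python sketch. The actual reviewer is a shell script whose internals are not shown here, so everything in this block is an assumption: the SHA-256 choice, the JSON state file, and the function names are all hypothetical.

```python
import hashlib
import json
from pathlib import Path
from typing import Dict, List


def file_digest(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's bytes."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def load_state(state_file: Path) -> Dict[str, str]:
    """Load the path -> digest map of reviewed files (empty if none yet)."""
    if state_file.exists():
        return json.loads(state_file.read_text())
    return {}


def files_needing_review(paths: List[Path], state: Dict[str, str]) -> List[Path]:
    """A file needs review if it is new or its digest differs from the stored one."""
    return [p for p in paths if state.get(str(p)) != file_digest(p)]


def mark_reviewed(path: Path, state: Dict[str, str], state_file: Path) -> None:
    """Record the file's current digest and persist the state to disk."""
    state[str(path)] = file_digest(path)
    state_file.write_text(json.dumps(state, indent=2, sort_keys=True))
```

Hashing content rather than comparing modification times means a file that is touched but unchanged is not queued for re-review.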

The review process has two phases:

- Detection – The model analyzes the code and records findings.

- Resolution – The model presents each finding to the human and works collaboratively to address it.

The detection phase is initiated with a prompt such as:

Please initiate a review by calling ./review.sh init and following the resulting instructions.

From there, the detection phase proceeds autonomously:

- The model repeatedly calls review.sh next to receive the instructions for the next checklist item.

- The model evaluates the code according to the prompt.

- If no issues are found, it simply continues with review.sh next.

- If issues are identified, it records them via review.sh record_finding "...finding text...".
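The loop above can be sketched as a small state machine. This Python version is a hedged illustration rather than the real review.sh: the checklist layout (a `## Title` heading followed by the prompt text) is an assumed format, and `parse_checklist` and `ReviewSession` are hypothetical names.

```python
import re
from dataclasses import dataclass, field
from typing import List, Tuple


def parse_checklist(markdown: str) -> List[Tuple[str, str]]:
    """Split an assumed '## Title' + body layout into (title, prompt) pairs."""
    parts = re.split(r"^## +(.+)$", markdown, flags=re.MULTILINE)
    # parts is [preamble, title1, body1, title2, body2, ...]
    return [(parts[i].strip(), parts[i + 1].strip()) for i in range(1, len(parts), 2)]


@dataclass
class ReviewSession:
    items: List[Tuple[str, str]]
    cursor: int = 0  # number of checklist items already handed out
    findings: List[str] = field(default_factory=list)

    def next(self) -> str:
        """Hand out the next checklist prompt, or report that detection is done."""
        if self.cursor >= len(self.items):
            return "All checklist items are done. Detection phase complete."
        title, prompt = self.items[self.cursor]
        self.cursor += 1
        return (f"[{self.cursor}/{len(self.items)}] {title}\n{prompt}\n"
                "Record any issue with record_finding, then call next.")

    def record_finding(self, text: str) -> str:
        """Append a finding, tagged with the checklist item currently under review."""
        title = self.items[self.cursor - 1][0] if self.cursor else "(no item)"
        self.findings.append(f"{title}: {text}")
        return "Finding recorded. Call next to continue."
```

Every return value ends by telling the caller what to do next, so the model never has to infer the protocol from memory.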

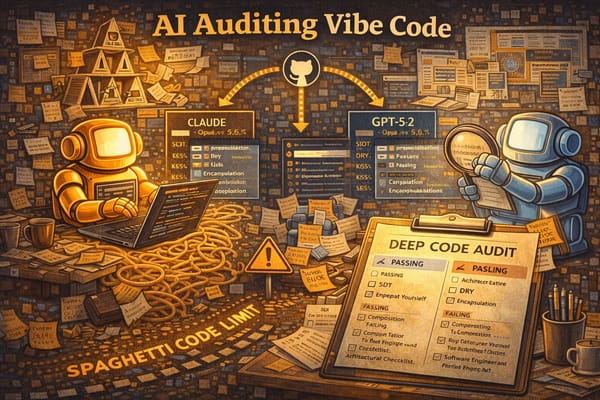

We found this approach to be highly effective at uncovering code quality issues that would otherwise be missed. These models have substantial latent capability, but that capability doesn’t reliably emerge in response to a single, naive prompt. Much like humans, models benefit from focused attention. Tokens are the currency that allows a model to attend to specific aspects of a codebase, and this review process helps spend them deliberately.

Tuning

Over the course of developing this tool, we discovered the need for tuning. For example, the model sometimes appears to get bored¹ and attempts to “cheat” by issuing a shell command that fires off many review.sh next calls at once. We addressed this by adding strong, explicit language to the prompts to discourage such creative interpretations of the review process.

We also found that in a model-driven setup, it’s extremely helpful for the tool to emit clear, explicit instructions at every step, even when they’re redundant. This keeps the model on track during long review sessions, which can last hours and span context compaction boundaries.
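One way to picture this is a tool whose every response restates where the review stands and what the rules are. The Python sketch below is illustrative only: `render_step` and `STANDING_RULES` are hypothetical, and the rule wording is invented for the example rather than taken from the actual prompts.

```python
from typing import List

# Hypothetical standing rules; the real prompt wording is not shown in this post.
STANDING_RULES: List[str] = [
    "Call next exactly once per step; never batch or loop it in a shell command.",
    "Record each issue with record_finding before moving on.",
]


def render_step(index: int, total: int, prompt: str, findings_so_far: int) -> str:
    """Build a self-contained message: progress, the task, and the repeated rules."""
    lines = [
        f"REVIEW IN PROGRESS: item {index} of {total} "
        f"({findings_so_far} findings recorded so far).",
        "",
        prompt,
        "",
        "Rules (repeated on purpose):",
    ]
    lines += [f"- {rule}" for rule in STANDING_RULES]
    lines.append("When this item is done, call next.")
    return "\n".join(lines)
```

Because each message carries its own progress line and rules, a model that has lost earlier context to compaction can still pick up from the latest tool output alone.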

Conclusion

Our reviewer is not a cure-all. Issues still slip through that a careful human review would catch. But it’s a significant improvement over a more naive approach, and it dramatically reduces the amount of debris a human reviewer must sift through. We expect to continue improving the reviewer over time, and to develop additional tools as well.

¹ To shamelessly anthropomorphize.